Updated: 3/18/2024

GA4 bot traffic skews website data, pollutes analytics, and ruins otherwise useful metrics. Because of that, you are going to need to prioritize bot filtering in your Google Analytics 4 implementation.

Bot traffic in GA4 has proven to be an entirely different beast compared to its predecessor, Universal Analytics. GA4 touts machine-learning algorithms combined with manual input from Google’s engineering team as its one-size-fits-all bot filter.

While this is a powerful foundation and may be improved upon in the future, many analysts and data scientists have come out of the woodwork to show that the default filter is far from perfect out-of-the-box.

Below, we’re going to be looking at bot filtering in GA4 using the format of the scientific method. We’ll get to:

- Understand the problem

- Ask the right question

- Test our hypothesis (through a case study we’ve done here at DOM)

This is where we should put a spoiler alert, because we’re all here for a reason, right?

What Is Bot Traffic In Google Analytics 4?

Bot traffic is a data-analytics-specific problem. You may have heard that most bot traffic is not actually malicious in nature and you may even have members of your data team that use bots to scrape the web for useful information.

While it’s true that these bots don’t pose a threat to your cybersecurity, the problem is that these bots will severely skew and bloat your website data, pollute your analytics, and ruin otherwise-useful metrics.

Before getting into the most common types of bot traffic, it’s important to understand the three key components of bots.

- Bots are scripts

- Scripts are a set of programmable instructions

- Scripts are most easily run on Virtual Machines

With these key components being taken into consideration, we can begin to look at the types of bots most often encountered in analytics and get to the question we all ideally would like to answer…

Is there a way to identify and filter out bot traffic to a statistically insignificant percentage of sessions?

Common Google Analytics Bot Traffic Type #1: Web Scrapers

Bot is a very open-ended term. Most traffic noise comes from web scrapers, which provide an automated, scalable solution for access to structured web data. You can think of web scrapers like the programming version of RegEx.

Any structured data on the web can be pulled into a spreadsheet at the speed of light. These bots are incredibly useful for lead generation, brand monitoring, company data, market analysis, and basically anywhere that publicly available data is useful.

According to the 9th Annual Bad Bot Report from Imperva, Selenium is the most popular tool used for web scraping. Imperva estimates that web scrapers like Selenium may be responsible for as much as 40-60% of website traffic depending on your industry. This might account for why some websites have such high traffic and yet lackluster conversion rate metrics.

Want some more fun facts about bot scripts?

- Web scrapers can have as few as three lines of code.

- Most web scrapers save web pages and their data to a file, which takes anywhere from 3-8 seconds.

These facts might lead you to believe that all bots should fall into an average session duration of fewer than two seconds. However, most web scrapers do more than just look through a website, so their session times vary.

Common Google Analytics Bot Traffic Type #2: Form Fillers

Form fillers do as the name implies: they fill out the forms on your website in the hopes that their spam tricks people into scams. If you’ve ever run into the issue where you’re getting valid-looking leads generated from your form submissions, only to follow up with them and they say they’ve never heard of you and never filled anything out, this bot is the reason.

Form fillers are similar to email phishing scams. These bots fill forms automatically using a series of logic statements. They often use stolen user data to appear legitimate. Even though they have people’s real information, they should be treated like all other bot traffic and be filtered out, at the very least, like a non-qualified lead.

Form fillers use a combination of Python – the most popular programming language for making bots – and JavaScript to execute predetermined scripts. While times vary, some form fillers can take less than 2 seconds to fill out a form.

With such a wide variation on the main metrics of web scrapers and form fillers, we need to look at other variables that we can combine average session duration with in order to identify bot traffic and filter it out of GA4.

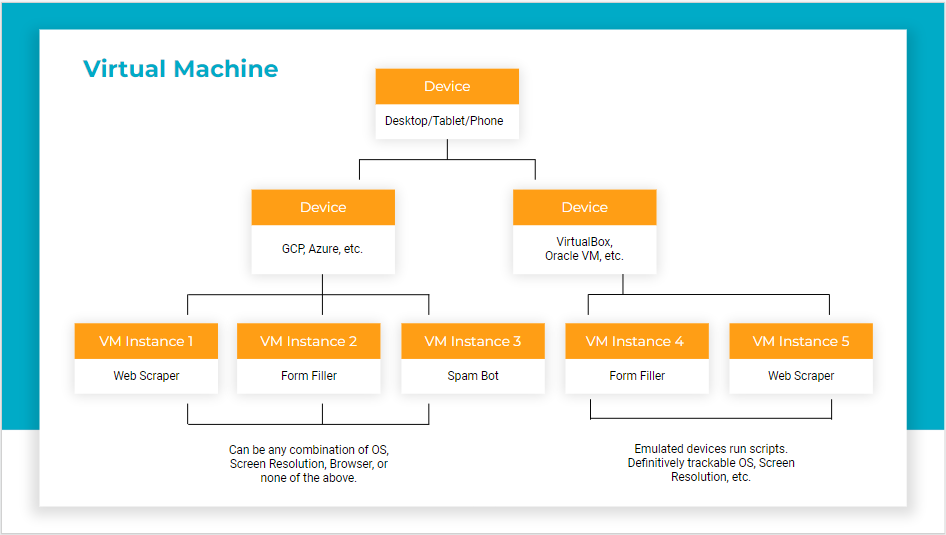

What Is A Virtual Machine?

A virtual machine (VM) is software that allows for the emulation of a whole computer or device.

VMs are usable on local disk or on the cloud. They can run as a desktop program or as a mobile app.

VMs are the best way to run scripts regularly. That said, virtual machines have several limitations. After all, your computer was designed to run only one computer: your own. That means limits have to be set in order to run Virtual Machines successfully, including:

- Running a whole device. Most devices are built to run only their own local, intended software. Emulating other devices, from phones to computers, inside one device is what forces these limits.

- Operating System Versions. VMs use old OS versions because they take up less computing power and because it takes time for newer versions to be cracked and made emulatable.

- Screen Resolution. Screen resolutions are pre-set and minimally adjustable to ensure consistency of emulation. Common default and maximum sizes include 800×600, 1360×768, 1140×900, and 1600×1200.
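To make that list usable, here is a minimal Python sketch, assuming your screen resolutions arrive as strings such as `800x600` or `800×600` from an exported report. The names `VM_PRESET_RESOLUTIONS`, `parse_resolution`, and `is_vm_preset` are ours for illustration and are not part of GA4. The set contains only the sizes listed above, so treat it as a starting point rather than a complete catalogue.

```python
# Pre-set sizes taken from the list above; extend with what your own reports show.
VM_PRESET_RESOLUTIONS = {(800, 600), (1360, 768), (1140, 900), (1600, 1200)}


def parse_resolution(value):
    """Turn '800x600' or '800×600' into (800, 600); return None if unparseable."""
    cleaned = value.strip().lower().replace("×", "x")
    width, sep, height = cleaned.partition("x")
    if not sep or not width.strip().isdigit() or not height.strip().isdigit():
        return None
    return int(width), int(height)


def is_vm_preset(value):
    """True when the reported screen resolution matches a listed VM pre-set."""
    return parse_resolution(value) in VM_PRESET_RESOLUTIONS
```

Values that cannot be parsed, such as `(not set)`, simply come back as not matching, so they can be handled by a separate check.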

What Is Screen Resolution In Google Analytics (GA4)?

On a typical computer or device, like the one you’re using now, screen resolution is the resolution of the monitor or built-in screen. It is based on hardware, and GA4 captures it in its Screen Resolution dimension.

If the majority of bots are using Virtual Machines, and Screen Resolution measures the resolution of your physical device, what happens when the device is software?

This leads us to our hypothesis (thank you for your patience).

Our Hypothesis For Bot Filtering In Google Analytics (GA4)

Bots can be identified through their Screen Resolution because bots use virtual machines that have pre-set and/or strange screen resolutions that stand out in analytics reports.

But screen resolution alone isn’t enough to definitively say that we can filter these users out of our data reports.

So… these suspect users are verifiable through:

- Average Engagement Time

- OS Version

- Various other dimensions

These checks work because of the fast executable run time of scripts and the technical limitations of Virtual Machines.

Results and Supporting Dimensions

To test our hypothesis, we ran a case study on a website that uses GA4 out-of-the-box to see what we could find.

All of our data came from a three-month period.

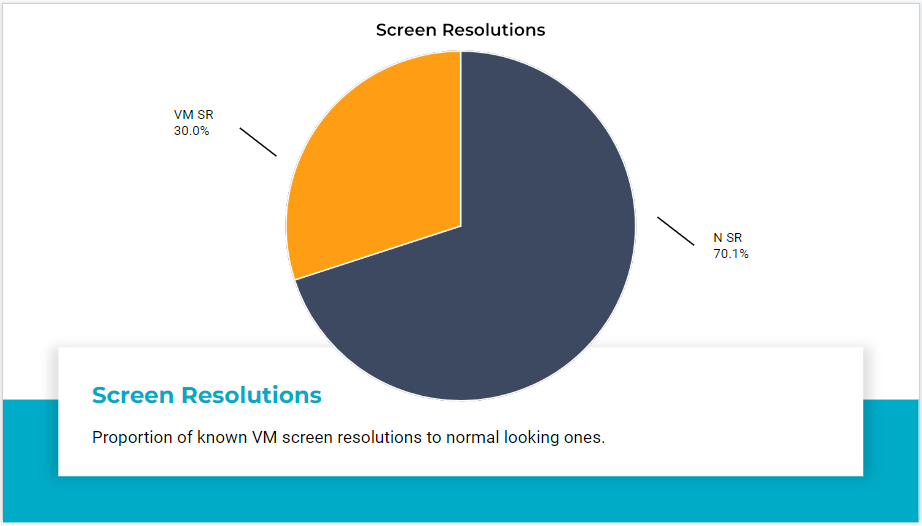

Traffic Using Virtual Machine Pre-Sets

Firstly, we found that 30% of their traffic belonged to screen resolutions that are common Virtual Machine pre-sets that are also not typically found as real-world device resolutions.
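If you want to run the same check on your own property, the arithmetic is simple. Below is a rough Python sketch, assuming you have exported session counts per screen resolution into `(resolution, sessions)` pairs. The function name `suspect_share`, the suspect set, and the sample rows are all invented for illustration; they are not the case-study data.

```python
# Hypothetical suspect list; replace with the pre-sets you see in your reports.
SUSPECT_RESOLUTIONS = {"800x600", "1600x1200"}

# Made-up example rows: (screen resolution, sessions).
SAMPLE_ROWS = [
    ("1920x1080", 450),
    ("390x844", 300),
    ("800x600", 150),
    ("1600x1200", 100),
]


def suspect_share(rows, suspect):
    """Return the fraction of sessions that came from suspect resolutions."""
    total = sum(sessions for _, sessions in rows)
    if total == 0:
        return 0.0  # avoid dividing by zero on an empty export
    flagged = sum(sessions for resolution, sessions in rows if resolution in suspect)
    return flagged / total


share = suspect_share(SAMPLE_ROWS, SUSPECT_RESOLUTIONS)
```

With the invented rows above, `share` works out to 0.25, meaning a quarter of the sample sessions used a suspect resolution.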

Next, we’ll look at User Interaction.

User Interactions In Google Analytics

In the top right corner there, you’ll see that the average user with those screen resolutions was on the page for zero seconds but did about 4 things.

If you know a person that can do 4 things in literally no time flat, you let us know.

They’re probably a world record holder, or they would be… except there were 4,000 “people” across these two screen resolutions alone that could do it, too.
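The "four things in zero seconds" pattern can be expressed as a simple rule. This is only a sketch: the function name `impossible_activity` and both thresholds are our assumptions, and you would tune them against your own data before trusting the result.

```python
def impossible_activity(users, total_engagement_seconds, event_count,
                        max_avg_seconds=1.0, min_events_per_user=2.0):
    """Flag a segment whose users do several things in almost no time.

    Both thresholds are illustrative assumptions, not GA4 defaults.
    """
    if users <= 0:
        return False  # nothing to judge
    avg_seconds = total_engagement_seconds / users
    events_per_user = event_count / users
    return avg_seconds < max_avg_seconds and events_per_user >= min_events_per_user
```

A segment of 4,000 users with zero total engagement time and about four events each would be flagged, while a segment averaging 45 seconds per user would not.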

The only other thing that we’ll point out is the black-color-coded cell over on the left. This screen resolution, a resolution that doesn’t exist as a real physical screen, represented 40% of all form fills for this website.

40% of their leads were useless because they didn’t have a robust bot filter and they just used GA4 out of the box.

Even though we aren’t going over all the details, this data certainly supports our hypothesis.

- None of the screen resolutions with under 8 seconds of average engagement time were running a current OS version

- Mobile users make up 16% of all users but 59.5% of the [Screen Resolution + OS] combinations with under 1 second of engagement

- Devices listed don’t match a real-life screen resolution

- Many devices aren’t listed because JavaScript isn’t able to automatically pull the information

- 98.96% of users under 8 seconds have no device set/detected

How To Set Up Bot Filtering In Google Analytics 4 (With Confidence)

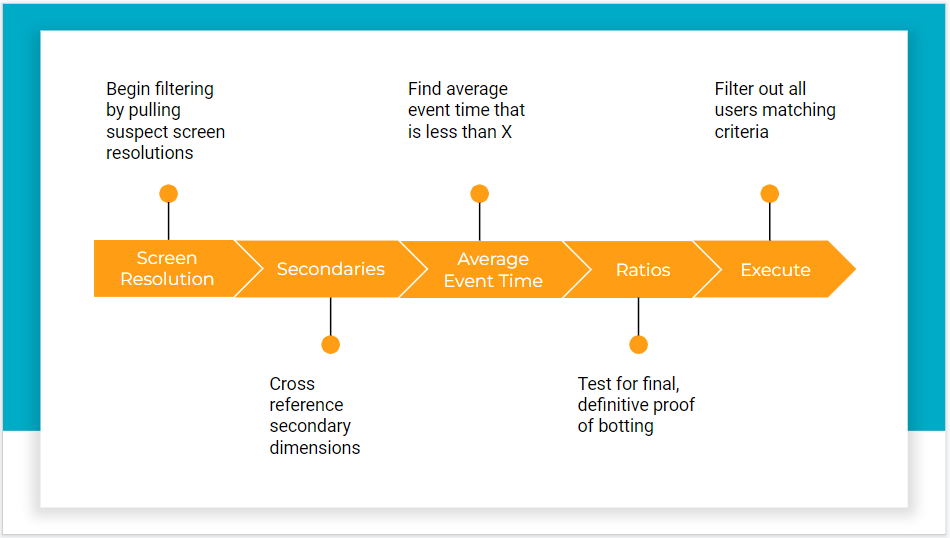

In order to confidently filter bots in GA4, you need to build a layered logic sequence. To spell it out plainly, your bot filter needs to audit traffic with a series of tests. If one statement turns out to be true, then it needs to pass on to the next statement, and so on.

How To Filter Bot Traffic In GA4?

Here’s a brief overview of the 5-step process for filtering bots in GA4.

- Begin bot filtering in Google Analytics 4 by pulling suspect screen resolutions

- Cross reference secondary dimensions – does the screen resolution combine with an old operating system?

- Find average engagement time that is less than 8 seconds

- Test for final, definitive proof before flagging traffic as bots, using different metrics found in GA4

- Filter out all users matching criteria
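The steps above can be sketched as a chain of checks in Python. This is a minimal illustration under stated assumptions, not a GA4 feature: the `TrafficRow` fields, the suspect list, and the choice of "no device detected" as the final test are ours, and the rows are assumed to come from data you have already exported.

```python
from dataclasses import dataclass

# Assumptions for illustration: adjust both to match your own reports.
SUSPECT_RESOLUTIONS = {"800x600", "1360x768", "1140x900", "1600x1200"}
ENGAGEMENT_THRESHOLD_SECONDS = 8.0


@dataclass
class TrafficRow:
    screen_resolution: str
    os_is_current: bool          # True when the OS version is a current release
    avg_engagement_seconds: float
    device_detected: bool        # False when no device is set/detected


def looks_like_bot(row):
    """Apply each layer in order; a row must pass every test to be flagged."""
    if row.screen_resolution not in SUSPECT_RESOLUTIONS:
        return False  # step 1: suspect screen resolution
    if row.os_is_current:
        return False  # step 2: paired with an old operating system
    if row.avg_engagement_seconds >= ENGAGEMENT_THRESHOLD_SECONDS:
        return False  # step 3: under 8 seconds of engagement
    return not row.device_detected  # step 4: final supporting signal


def filter_bots(rows):
    """Step 5: keep only the rows that did not match every criterion."""
    return [row for row in rows if not looks_like_bot(row)]
```

Because each layer returns early, a row with a suspect resolution but a current OS stays in your data, which keeps the filter conservative.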

These combinations can be saved as custom metrics in GA4. They also make a strong argument if you need to justify a custom GA4 migration.

Any other questions about bot filtering in GA4? Don’t hesitate to contact us.