Imagine for a second you’re manning an oil well. You most likely have little in common with someone managing oil drilling production. But for the sake of our point, play along.

You understand the core competency of your role. You need to make sure the well is producing adequate oil. The oil generated from the well creates profit for the company. Each barrel of crude oil sells for about $60 on the world market.

You’re producing oil. Therefore, you’re making money. Everyone is happy.

But there’s a problem. Your strategic approach only measures oil production.

Does the well produce oil? How much does it cost to run the well?

Answering these simple questions yields an impressive net sum. But uncovering other influential factors in the data is laborious, so your perspective is far from comprehensive. Some of your reports are skewed and produce misleading data.

Thankfully, the oil well makes money despite your poor analytics. But that’s no way to scale or compete in the long term.

Last year, we interviewed one of the brightest minds in predictive analytics. Ramesh Menon, Vice President of Product at Infoworks.io, explained how his company deploys its Autonomous Data Engine product at a large oil and natural gas company.

As you might guess, Menon rejected simplistic approaches to oil drilling management. Instead, his product aims to ingest all metrics and create comprehensive insights. The result is oil drilling management that’s informed and flexible, with predictive analytics guiding real-time drilling decisions.

Infoworks lets oil well management teams view robust data rather than peering through the same narrow optics. Instead of relating oil only to profit, management discovers hundreds of profit influencers, all of which can be leveraged to increase revenue.

We don’t plan to drill 5,000 feet below the earth’s surface. But that doesn’t mean we can’t learn a lesson from the application of predictive analytics on oil wells.

Poor Web Analytics Is a Losing Proposition

We don’t need the Autonomous Data Engine. Websites are not oil wells.

If you want to compete on the web, you must leverage analytics tools. That means data-driven web analytics via Google Analytics.

If you operate from a 100,000-foot view, you leave yourself exposed to competitors whose marketing campaigns use data to predict consumer behavior, or who use data to drive conversion rate optimization (CRO).

A poor analytics strategy can cost websites their rankings, clicks, revenue, returning visitors, leads, and brand reach.

You can find the worst cases of poor analytics on highly profitable websites. When our 100,000-foot perspective shows a profit, we often disregard shoddy analytics.

But we’re leaving money on the table. And that needs to change.

What Is the 100,000-Foot Google Analytics View?

If you’re only looking at traffic and where it comes from, you’re missing critical data. The Google Analytics dashboard remains a convenient way to digest data quickly, but it isn’t the right perspective for scaling and implementing change.

Yet that’s how most people look for actionable insights. They rely only on acquisition numbers. The more, the merrier.

If the traffic is low, the chase for why ensues. If the traffic is high, it’s time to kick back and bask in our glory.

The mistake is relying on simple data relationships. Big, broad numbers drive our reactions, and we end up making poor decisions based on an incomplete picture of our customer data.

There is no master list of Google Analytics stats that act as universal markers. The relationship between a given stat and site changes, site health, or customer happiness is unique to every site.

The Value of a Company Is Found in Data

Six years ago, we decided to make a change. We wanted to improve a lead capture form. We wanted more consumer leads.

Our first thought was to look at our current lead capture form. It was a simple slide-in form that appeared at the bottom of the site’s articles, with a simple call to action: “subscribe today and stay current.”

Our issue was that we wanted more leads. That’s not to say we weren’t getting enough leads. We couldn’t even say whether we had a lead conversion problem, because we’d never checked. We only looked at total leads and how they related to overall traffic. The more traffic, the more incoming leads. Our leads were tiny barrels of oil. They made money. So, we needed more of them.

We decided to deploy a more aggressive tactic. Therefore, we created an overlay lead capture form.

The color scheme of the new lead capture form was up for revision. The old version, the one that had served us well, was an ugly grey. We wanted to serve up happiness, so after investing a fair amount of time, we settled on sunny yellow.

We also decided the new form needed more than a simple call to action, so we wrote an enticing header and flanked it with bullet points.

We made the pop-up a big, not-to-be-missed event.

After only a few days, we noticed that leads were down. But so was traffic. In our view, leads were tied to traffic, so we thought we understood the issue.

We decided that we needed more traffic to support the improved lead capture experience. So we pushed for a heavier influx of traffic.

But the leads hardly improved.

How could that be?

The problem was that our data perspective was too simple. Only two data sets were at work: traffic and leads.

As focused as we were on a new lead capture strategy, we had no real strategy at all. We chose a lead capture style and color with reckless abandon. We wrote more sales text because more felt better.

If you had asked us then, we’d have told you we were data experts. We knew that leads and traffic were related. We knew leads created revenue.
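Even the one relationship we did watch, leads versus traffic, deserved a ratio rather than two raw totals. Here’s a minimal Python sketch of that conversion rate check. The weekly figures are hypothetical, invented only to show how lead totals can hold steady or even grow while the conversion rate falls.

```python
def conversion_rate(leads: int, sessions: int) -> float:
    """Return the share of sessions that produced a lead (0.0 to 1.0)."""
    if sessions <= 0:
        raise ValueError("sessions must be a positive number")
    return leads / sessions


# Hypothetical weekly figures, for illustration only.
weeks = {
    "old slide-in form": {"sessions": 10_000, "leads": 300},
    "new pop-up, more traffic": {"sessions": 14_000, "leads": 320},
}

for label, week in weeks.items():
    rate = conversion_rate(week["leads"], week["sessions"])
    print(f"{label}: {week['leads']} leads, {rate:.2%} conversion rate")
```

With these made-up numbers, lead volume rises slightly (300 to 320) while the conversion rate drops from 3.00% to 2.29%, exactly the kind of signal a traffic-and-leads view hides.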

But there were other metrics at play.

For one, the time on site declined drastically. A deeper dive revealed that the aggressive lead capture form caused both less time on the site and increased bounce rates for mobile users.

The new lead capture form contributed to a severe increase in our site’s load time. Our site was loading slower, particularly on mobile, causing people to bounce. If your site’s load time drags, your site’s health collapses. You can refer to our website load time guide for more information on that.

If we’d understood the importance of deeper metrics such as bounce rate and time on site, we could have diagnosed the issue.

But those two metrics didn’t fully explain the drop in lead capture.

It turned out that the longer sales copy reduced leads as well. We learned that through A/B testing: our original, shorter call to action enticed more lead submissions. If we’d A/B tested from the start, we would have known that.

Erroneous interpretations of data aren’t just inconvenient; they’re costly. According to Gartner research, poor data quality costs companies almost $10 million annually. The reason, as the Harvard Business Review explains, is that poor data leads managers and marketers to make bad decisions.

Our egos tempt us to improvise strategy on gut feeling. But effective marketing strategy builds on influential data.

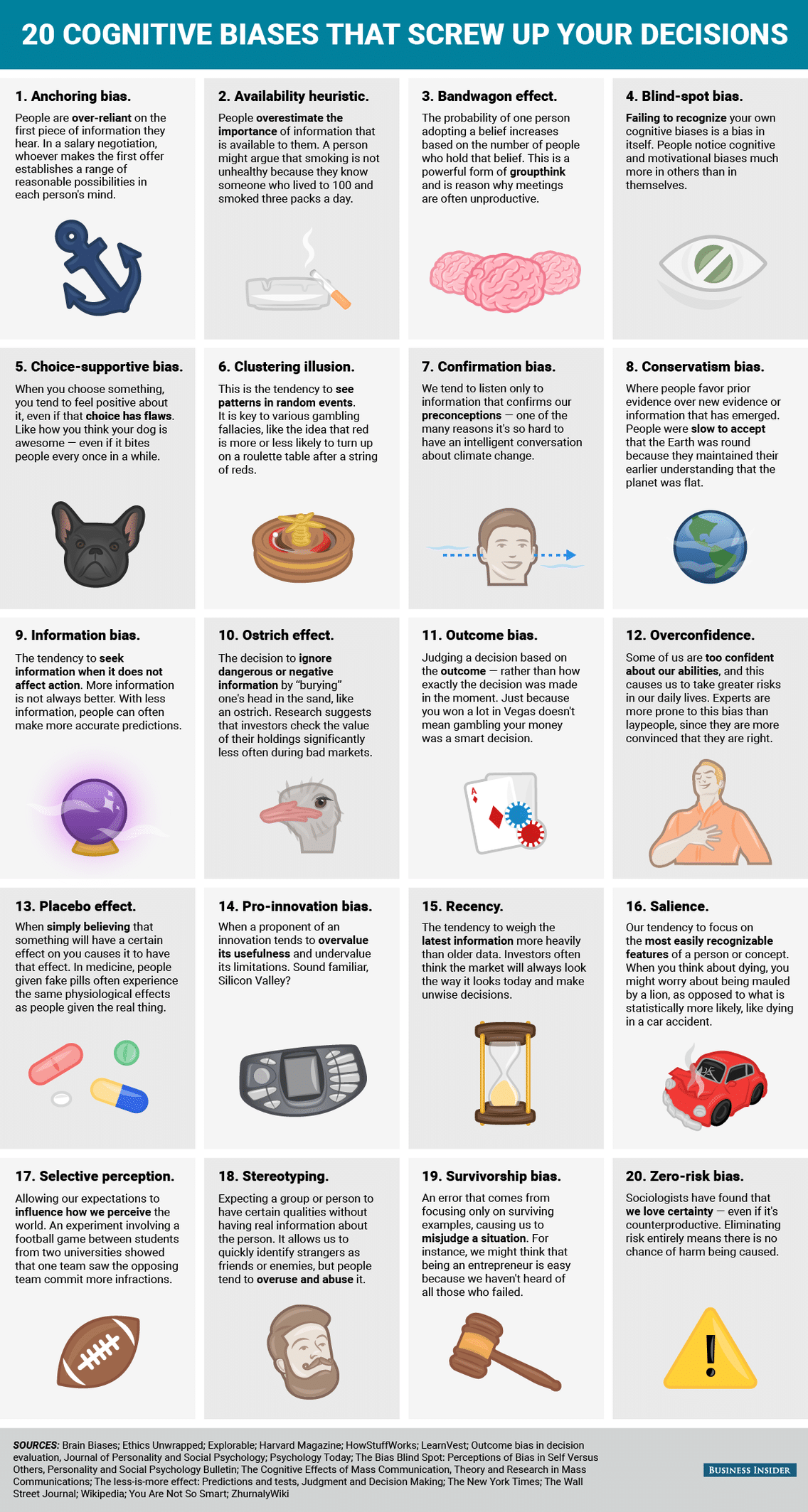

Don’t think your ego gets in the way of good decision making? Check out this graphic from Business Insider’s “20 cognitive biases that screw up your decisions.”

Our decision-making process is crowded with influences. The only way to turn down the volume on the bad ones is to leverage analytics. Analytics, read correctly, don’t lie, but our intuition often does.

Not only that, but companies that properly use analytics make faster decisions.

Better and faster decisions; what more could you ask for?

The question now is: how do we rethink the game?

How You Should Approach Analytics

By now, the solution to what ails you feels obvious. That’s because it is.

You need to build a data-driven environment. That’s easier said than done, but the change pays off: analytics improves almost every facet of your business, provided you actually use it.

You must make a philosophical change to the way you approach change. From now on, you make no changes to any part of your site or customer experience without fully delving into the data. You must determine which metrics matter and what direction you want the needle to move on those metrics.

Establish Analytics Thresholds

In our earlier example, we related more traffic to more leads. This led us to use an underperforming lead capture pop-up for months. All along, influential metrics such as time on site, bounce rates, and site load times reflected an issue.

If we had established those metrics as barometers of failure or success, we would not have wasted so much time. Ultimately, we lost leads.
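One way to make thresholds concrete is to write them down before a change ships and check them automatically afterward. The Python sketch below is hypothetical throughout: the metric names, limits, and readings are invented for illustration, and in practice you’d pull the observed values from your own Google Analytics reports.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    higher_is_better: bool

    def passes(self, value: float) -> bool:
        # Higher-is-better metrics must stay at or above the limit; others at or below it.
        return value >= self.limit if self.higher_is_better else value <= self.limit


# Hypothetical thresholds a team might agree on before launching a change.
THRESHOLDS = [
    Threshold("avg_time_on_site_s", 90.0, higher_is_better=True),
    Threshold("mobile_bounce_rate", 0.55, higher_is_better=False),
    Threshold("avg_page_load_s", 3.0, higher_is_better=False),
]


def failed_thresholds(observed: dict[str, float]) -> list[str]:
    """Return the metrics that are missing or outside their agreed limits."""
    failures = []
    for threshold in THRESHOLDS:
        value = observed.get(threshold.metric)
        if value is None or not threshold.passes(value):
            failures.append(threshold.metric)
    return failures


# Hypothetical readings after launching a new pop-up.
print(failed_thresholds({"avg_time_on_site_s": 62.0, "mobile_bounce_rate": 0.71, "avg_page_load_s": 4.2}))
```

Treating a missing metric as a failure is a deliberate choice in this sketch: if you can’t measure it, you can’t claim the change passed.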

A/B Test Everything

You hear it all the time. You should A/B test changes. But you don’t do it. You’re too busy relying on intuition.

From now on, you don’t decide which colors or what text works, you let the consumer do that for you. Think of A/B testing as a conversation with a consumer that reveals what they actually want from you.

To make such a philosophical change, you’ll need to put your ego aside. We all have some ego; it’s a healthy thing. But we can’t let it get in the way of success. Making changes without A/B testing impedes progress.

So, from now on, you’ll establish analytics thresholds for changes and A/B test every change.
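Deciding whether variant B really beat variant A takes a significance test, not just a glance at two percentages. One common choice is a two-proportion z-test, sketched below in Python using only the standard library. This is one standard technique, not necessarily what any given A/B testing tool uses internally (some use Bayesian or sequential methods), and the visitor and conversion counts are hypothetical.

```python
from __future__ import annotations

from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test comparing the conversion rates of variants A and B.

    Returns (z, p_value). Assumes visitors are randomly and independently
    assigned to variants and that each variant has a reasonably large sample.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variant needs at least one visitor")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both variants converted at 0% or 100%: no detectable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: A = short call to action, B = longer sales copy.
z, p = two_proportion_z_test(conv_a=240, n_a=5_000, conv_b=190, n_b=5_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up numbers, z is negative (B converts worse) and p comes out below 0.05. Fix your sample size before the test starts and avoid stopping early the moment p dips below your cutoff, since repeated peeking inflates false positives.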

Why Your Analytics Deceives You

For some of you, the first part of this article doesn’t apply. You love data and analysis. It guides your decision making. You don’t ignore statistics in favor of intuition.

But your growth still suffers.

So what gives?

Bad analysis plagues your strategy.

It’s everywhere, but you don’t realize it.

Measurement tools surround us in everyday life, and they save us from peril.

When you grill, you might use a meat thermometer. Expose the meat to too much heat, and it dries out and becomes chewy. Expose it to too little heat, and you risk serving your guests a bacterial infection.

This is why you use a meat thermometer. It measures the internal temperature of the meat. You can instantly learn that your pork chops have reached 145°F, the USDA’s minimum safe internal temperature for whole cuts of pork (followed by a three-minute rest).

Pulling your pork chops at 145°F means they are cooked at the lowest safe temperature, with minimal risk from bacteria.

You used analytics to create an amazing-tasting dinner, free from the risk of illness.

But what if the meat thermometer you use is compromised? It doesn’t accurately measure temperature, causing a faulty analysis. This means your meat turns out dry and inedible, or undercooked.

You’d probably stop using the meat thermometer, right?

Just as with cooking, websites rely on correct analytics. When analytics break down, we begin making unjustified decisions, or no decisions at all. Poor data means we miss opportunities and underdiagnose problems. We risk our site’s reputation and health.

But how do cases of poor analytics happen? Isn’t Google Analytics pretty straightforward? Shouldn’t you know if your site is loading slowly?

These things aren’t that simple.

Poor analytics can be the result of incorrect setups and inaccurate interpretations. Let’s have a look at several possible causes of poor analytics.

Your Google Analytics Is Poorly Installed

Poorly installed Google Analytics code is more prevalent than you might think.

Often, a site owner inadvertently fails to add analytics tracking code to some pages. Websites that update frequently can blossom into massive sites with thousands of pages. Every page missing the tracking code is a page you lose data on.

We suggest verifying your Google Analytics install. You might feel confident that your WordPress plugin has you covered, but you might be surprised.

Use W3C Link Checker or Web Link Validator to validate tracking code across every page on your site. We would also encourage everyone to implement Google Tag Manager. This allows you to install and validate tracking codes site-wide. Check out some of our free Google Tag Manager Containers for download here.
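If you’d rather script the check yourself, here’s a minimal Python sketch. It fetches each URL you give it (for example, the URLs listed in your XML sitemap) and reports pages whose raw HTML doesn’t contain your tracking ID. The ID and URLs are hypothetical placeholders. Two caveats: it only sees the HTML the server returns, so a tag injected by JavaScript or by Google Tag Manager won’t show up (search for your GTM container ID instead in that case), and finding the ID doesn’t prove the tag actually fires.

```python
from __future__ import annotations

import re
from typing import Callable, Iterable
from urllib.request import urlopen


def has_tracking_id(html: str, tracking_id: str) -> bool:
    """True if the exact tracking ID appears in the HTML (not as part of a longer ID)."""
    pattern = r"(?<![\w-])" + re.escape(tracking_id) + r"(?![\w-])"
    return re.search(pattern, html) is not None


def fetch_html(url: str) -> str:
    """Download a page's raw HTML (no JavaScript is executed)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def pages_missing_tracking(
    urls: Iterable[str],
    tracking_id: str,
    fetch: Callable[[str], str] = fetch_html,
) -> list[str]:
    """Return the URLs whose HTML does not contain the tracking ID."""
    return [url for url in urls if not has_tracking_id(fetch(url), tracking_id)]


# Offline usage example with stand-in pages (hypothetical URLs and ID).
fake_site = {
    "https://example.com/": "<script>gtag('config', 'G-EXAMPLE1');</script>",
    "https://example.com/old-post/": "<html><body>No tag here</body></html>",
}
print(pages_missing_tracking(fake_site, "G-EXAMPLE1", fetch=fake_site.__getitem__))
```

The `fetch` parameter is there so you can test the logic offline, as above, before pointing it at your live site with the default downloader.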

You’re Using a CDN Like Cloudflare

You’ve changed your DNS to route through Cloudflare, hoping to reduce load times. But after running a GTmetrix scan, you feel pretty let down by the poor results.

Or, you can’t understand why your Cloudflare traffic is higher than your Google Analytics traffic.

Because you’re using a CDN, some of your statistics will diverge from what other tools report.

Because you proxy through the CDN, your load times may fluctuate considerably. But this isn’t the entire story. A slow GTmetrix scan doesn’t necessarily mean the site is slow. It’s important to flush the Cloudflare cache to gain more accurate results.

Additionally, GTmetrix often doesn’t tell the entire story. Its results depend on the test location and a variety of test settings, so it’s important to cross-check with Pingdom and Google PageSpeed Insights as well. More important than any single test, though, is knowing what your load times are over time. Remember, data-driven decisions start with understanding your baseline.

Cloudflare counts every request that passes through its proxy, bots included, whereas Google Analytics only records visits from browsers that run its tracking script and can exclude known bots. On the other hand, Cloudflare misses any traffic that doesn’t go through its proxy.

Use the Google Analytics Site Speed reports to gain clarity on historical and current load times. This will establish your baseline.
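Once you have historical numbers, a baseline can be as simple as a median plus a tolerance. The Python sketch below assumes you’ve exported daily average page load times in seconds; the sample values are hypothetical, and the 25% tolerance is an arbitrary assumption you should tune to your own site’s normal variation.

```python
from __future__ import annotations

from statistics import median


def load_time_baseline(samples_s: list[float]) -> float:
    """Median load time in seconds; a few outlier test runs barely move it."""
    if not samples_s:
        raise ValueError("need at least one load-time sample")
    return median(samples_s)


def is_regression(sample_s: float, baseline_s: float, tolerance: float = 0.25) -> bool:
    """True if a new measurement is more than `tolerance` (25% by default) slower than baseline."""
    return sample_s > baseline_s * (1 + tolerance)


# Hypothetical daily average page load times (seconds).
history = [2.1, 2.3, 1.9, 2.4, 2.2, 2.0, 2.6]
baseline = load_time_baseline(history)
for new_reading in (2.5, 3.1):
    status = "regression" if is_regression(new_reading, baseline) else "within normal range"
    print(new_reading, status)
```

The median is used instead of the mean so that one unusually slow scan (say, a cold cache or a distant test location) doesn’t drag the baseline up.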

Your Data Settings Aren’t What You Think

Google Analytics view filters permanently alter the data in a view. Unlike segments, which only change how existing data is displayed, view filters permanently exclude or modify data as it’s processed. Think of segments as temporary, non-destructive lenses on your data and view filters as a permanent, view-wide change.

The most common Google Analytics filter involves the exclusion of internal traffic sources. You probably don’t need to see your own clicks. This filter creates cleaner data by filtering out your home and/or office IP address.
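You configure this filter in the Google Analytics admin, not in code, and GA reports don’t expose visitor IPs. Still, a short Python sketch can illustrate what an exclude-by-IP filter does to incoming hits and why it’s permanent: matching hits never reach the view, whereas a segment would merely hide them at report time. The IP ranges and hit records are hypothetical (taken from documentation address ranges), and the hit structure is a simplification.

```python
from __future__ import annotations

from ipaddress import ip_address, ip_network

# Hypothetical internal addresses (documentation ranges); substitute your own.
INTERNAL_NETWORKS = [
    ip_network("203.0.113.0/24"),   # office range
    ip_network("198.51.100.7/32"),  # home connection
]


def is_internal(ip: str) -> bool:
    """True if the IP falls inside any internal network (IPv4/IPv6 mismatches never match)."""
    addr = ip_address(ip)
    return any(addr in network for network in INTERNAL_NETWORKS)


def apply_exclude_filter(hits: list[dict]) -> list[dict]:
    """Mimic an 'exclude internal traffic' view filter: matching hits are dropped outright."""
    return [hit for hit in hits if not is_internal(hit["ip"])]


hits = [
    {"ip": "203.0.113.42", "page": "/pricing"},  # someone in the office
    {"ip": "192.0.2.10", "page": "/blog/post"},  # an outside visitor
    {"ip": "198.51.100.7", "page": "/"},         # you, at home
]
print(apply_exclude_filter(hits))
```

Note that the filtered list simply no longer contains the internal hits; there is nothing left to "un-filter" later, which is exactly why a misconfigured view filter is so costly.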

Often, a sloppy digital marketing agency or shoddy consultant has changed filter settings. Or you read an article encouraging you to modify settings and later forgot you made the change.

In either case, those filters stay in place until you remove or revert them, and any data they excluded in the meantime is gone from that view.

Double-checking your view filter settings is important. Look for odd filters. You can learn more about view filters from Google.

You’re Overwhelmed With Referral Spam

A spike in traffic is nice. Learning that this increase is the result of spam is a bummer.

Referral spam populates your Google Analytics account with fake referrers. Initially, this appears as increased traffic, but ultimately it’s junk.

Spambots distort your analytics and leave you misinformed. They can also spike bounce rates, which can skew your site-wide averages and the comparisons you make against other traffic sources. Not to mention the strain that more and more spam requests can put on your server’s processor and memory.

Referral spam is a two-part problem.

The first is inflated statistics. The second is spammy referral traffic that needs culling.

When referral spam runs high in your Google Analytics account, you lose visibility. It deceives by making the site appear to receive more traffic than it actually does, and it conceals by covering up authentic traffic successes.

Referral traffic is a great indication of a site’s brand recognition, search engine health, social media following, and return visitors. You can’t make data-driven decisions when spam referrals convolute your view.

Referral spam can be tough to spot. In some cases, the referring URLs appear legitimate, adding to the frustration.

So here’s what you can do to clean your data.

- In Google Analytics, go to Acquisition > All Traffic > Channels.

- On Secondary Dimension, click Source/Medium.

- Now click Add Segment.

- Click New Segment.

- Click Conditions, and set the filter to Exclude sessions so the matches below are removed from your view.

- Now click Behavior, then Hostname.

- Now, using the dropdown to the right of Hostname, select Matches Regex.

- Add the following:

offer|free-|share|mercedes|buy|cheap|googlsucks|benz|sl500|hulfington|buttons|darodar|pistonheads|motor|money|blackhat|backlink|webrank|seo|phd|crawler|anonymous|\d{3}.*forum|porn|webmaster|flipboard|fl.ru|mbca|ahrefs|game|.io|^sex|^video

(We got this trick from Brian Clifton’s website. It should help you eliminate a lot of referral spam.)

- Now, on the bottom right, hit that OR button.

- Now, courtesy of Neil Patel, paste the following filter:

dailyrank|100dollars-seo|anticrawler|sitevaluation|buttons-for-website|buttons-for-your-website|-musicas*-gratis|best-seo-offer|best-seo-solution|savetubevideo|ranksonic|offers.bycontext|7makemoneyonline|kambasoft|medispainstitute

- Again, hit OR and paste this in:

127.0.0.1|justprofit.xyz|nexus.search-helper.ru|rankings-analytics.com|videos-for-your-business|adviceforum.info|video--production|success-seo|sharemyfile.ru|seo-platform|dbutton.net|wordpress-crew.net|rankscanner|doktoronline.no|o00.in

- Once again, hit OR and paste this:

top1-seo-service.com|fast-wordpress-start.com|rankings-analytics.com|uptimebot.net|^scripted.com|uptimechecker.com
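Before saving a segment like this, it’s worth testing the patterns against hostnames you know, including your own. The Python sketch below joins a subset of the patterns from the steps (copied as written) into one alternation, mirroring the OR conditions, and checks sample hostnames. It assumes Google Analytics’ Matches Regex does a partial match, like Python’s `re.search`; simple alternations like these should behave the same in both engines, but treat that as an assumption. The sample hostnames are hypothetical.

```python
import re

# A subset of the patterns from the steps above, copied as written.
# Paste in the full lists before relying on this.
SPAM_PATTERNS = [
    r"offer|free-|share|mercedes|buy|cheap|darodar|blackhat|backlink|seo|crawler|.io|^sex",
    r"dailyrank|100dollars-seo|buttons-for-website|best-seo-offer|ranksonic",
    r"127.0.0.1|justprofit.xyz|rankings-analytics.com|success-seo",
    r"top1-seo-service.com|uptimebot.net|uptimechecker.com",
]

# One alternation, like chaining the conditions with OR.
SPAM_RE = re.compile("|".join(f"(?:{pattern})" for pattern in SPAM_PATTERNS))


def is_spam_hostname(hostname: str) -> bool:
    """True if any pattern matches anywhere in the (lowercased) hostname."""
    return SPAM_RE.search(hostname.lower()) is not None


for host in ("darodar.com", "www.example.com", "portfolio.example.com"):
    print(host, is_spam_hostname(host))
```

Notice that `portfolio.example.com` is flagged: the unescaped dot in `.io` matches any character, so "lio" counts. Broad tokens such as `seo` or `share` can likewise catch legitimate hostnames, so if your own domain matches, escape the dot (`\.io`) or drop the offending token before applying the segment.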

If you want to learn a lot more about how to deal with referral spam in your Google Analytics, check out Neil Patel’s full article.

Your Campaigns Aren’t Tagged

A primary reason we use Google Analytics is that it tells us where our traffic originates. That’s obvious. It should be equally apparent that when Google Analytics fails to display traffic’s origin, we operate blindly.

While some mystery traffic is par for the course, many of these cases can be solved with proper campaign tagging.

If you aren’t tagging your campaigns properly, you’re losing vital information.

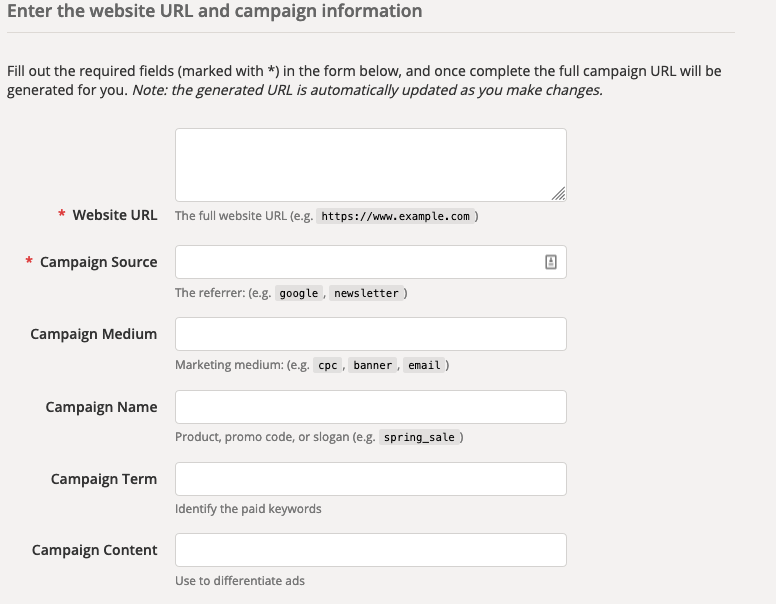

To properly tag your campaigns, apply UTM parameters. They’re simple to add and let Google Analytics track the source, medium, campaign, term, and content of a given URL.

Use Google’s Campaign URL Builder to create these tags easily.

Here’s a screencap that shows how easy the campaign builder is to use.

The result will be a URL that looks something like this: https://directom.com/?utm_source=signature&utm_medium=email

The exact URL depends on what you enter into the builder, but you get the idea.
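If you tag links in bulk, building the URLs in code avoids typos. Here’s a minimal Python sketch using only the standard library; the function name `add_utm` is hypothetical, while the five parameter names are the standard UTM parameters. It reproduces the example URL shown earlier.

```python
from __future__ import annotations

from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(
    url: str,
    source: str,
    medium: str,
    campaign: Optional[str] = None,
    term: Optional[str] = None,
    content: Optional[str] = None,
) -> str:
    """Append UTM parameters to a URL, keeping any existing non-UTM query parameters."""
    utm = {
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
        "utm_term": term,
        "utm_content": content,
    }
    utm = {key: value for key, value in utm.items() if value}  # drop unused fields
    parts = urlsplit(url)
    # Keep existing parameters, but let new UTM values replace old ones with the same name.
    query = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k not in utm]
    query.extend(utm.items())
    return urlunsplit(parts._replace(query=urlencode(query)))


print(add_utm("https://directom.com/", source="signature", medium="email"))
# https://directom.com/?utm_source=signature&utm_medium=email
```

Values are URL-encoded automatically, so a campaign name like "spring sale" becomes `spring+sale` in the final link.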

If you see a lot of mystery traffic, check old URLs for proper tagging.

You Duplicated Your Google Analytics Tags

Your site experiences a sudden spike in traffic. But something seems off. When you look through your Analytics account, you can’t find a good reason for the increase in traffic.

It’s possible you’ve duplicated your Google Analytics tracking code. This happens more often than you might think. Because many of us install tracking code through plugins, a plugin can occasionally add a second copy on top of an existing install.

This often happens when plugins or sites update.

Although it feels great to see increased traffic, it is crucial to make sure the traffic is valid.
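A quick heuristic check is to count how many times a page’s HTML initializes the same property. The Python sketch below counts `gtag('config', ID)` and `ga('create', ID)` calls for one tracking ID; more than one suggests a duplicate install. The ID and page snippet are hypothetical, and this only inspects the raw HTML, so duplicates injected through Google Tag Manager or other scripts need to be checked there.

```python
import re

QUOTE = "['\"]"  # matches either a single or a double quote


def count_ga_initializations(html: str, tracking_id: str) -> int:
    """Count gtag('config', ID) and ga('create', ID) calls for one property in a page's HTML."""
    pattern = re.compile(
        r"(?:gtag\(\s*" + QUOTE + "config" + QUOTE
        + r"|ga\(\s*" + QUOTE + "create" + QUOTE + r")"
        + r"\s*,\s*" + QUOTE + re.escape(tracking_id) + QUOTE
    )
    return len(pattern.findall(html))


# Hypothetical page where a plugin added the same snippet a second time.
page = """
<script>gtag('config', 'G-TEST123');</script>
<!-- plugin output -->
<script>gtag("config", "G-TEST123");</script>
"""
count = count_ga_initializations(page, "G-TEST123")
if count > 1:
    print(f"Possible duplicate install: {count} initializations of G-TEST123")
```

Requiring a closing quote right after the ID keeps a longer ID (say, one with an extra digit) from being counted as a match.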

You Aren’t Tracking Users Across Subdomains

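If you use subdomains on your website, you might run into issues with Google Analytics. Depending on how your tracking code sets its cookies, Google Analytics can treat each subdomain as a separate site, so a single visitor moving between, say, blog.example.com and shop.example.com may show up as multiple sessions or as a self-referral.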

If you think this might be the case for you, check out this post on how to track users across subdomains.

Conclusion

You understand the importance of being a data-driven executive.

Data is the fundamental influencer of the most important decisions. Without data, we fly blind.

The only thing worse than making decisions while ignoring data is making decisions using invalid data. It’s important to audit the integrity of your Google Analytics data on a frequent basis. It takes little effort, yet yields big results.

Want to be able to use your data to make better decisions to help grow your business? Learn more about our online marketing agency and expert marketing analytics services here.

To get more information on this topic, contact us today for a free consultation or learn more about our status as a Google Premier Partner before you reach out.